Letting one agent play with another in a shell script

Motivation

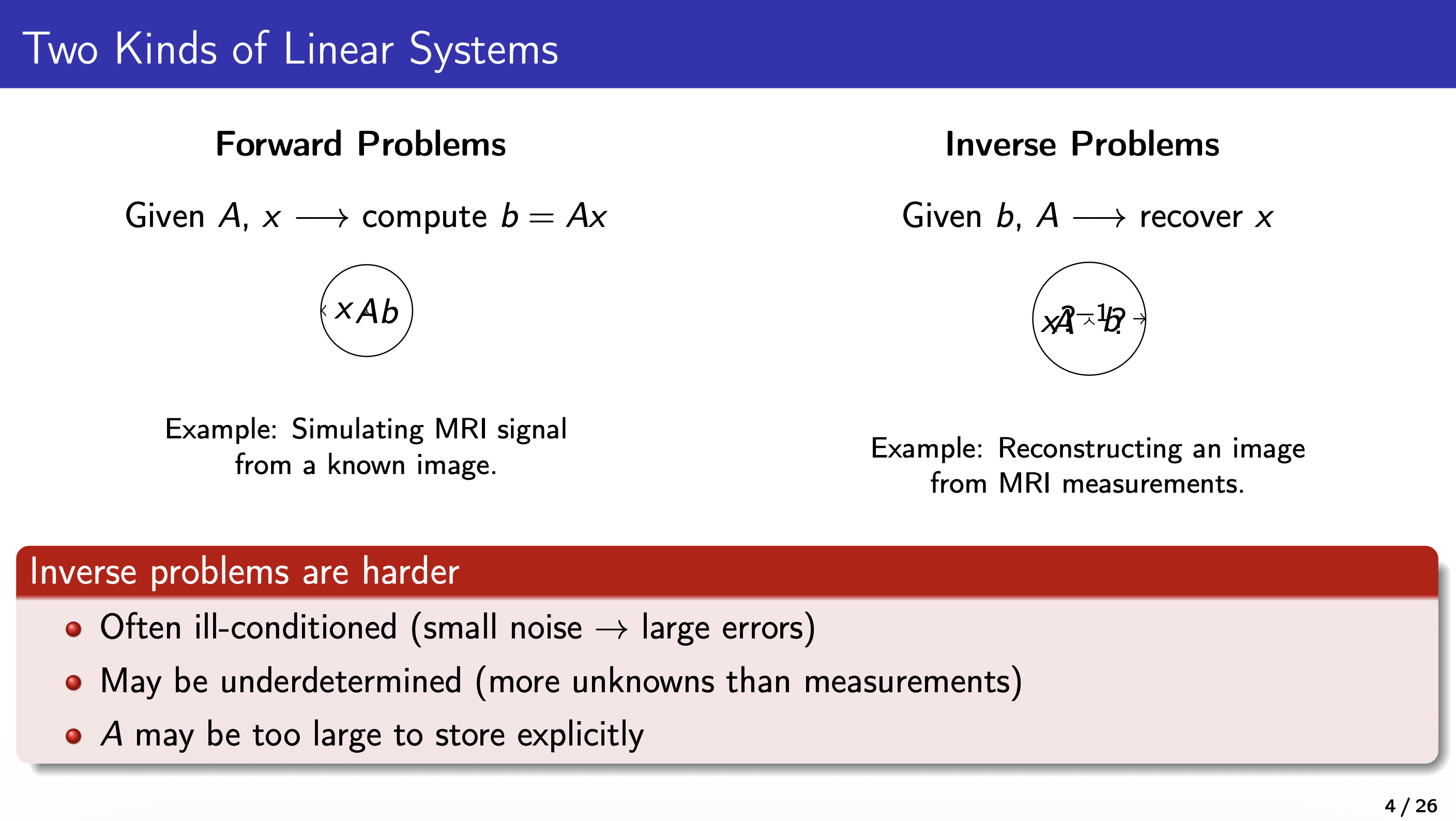

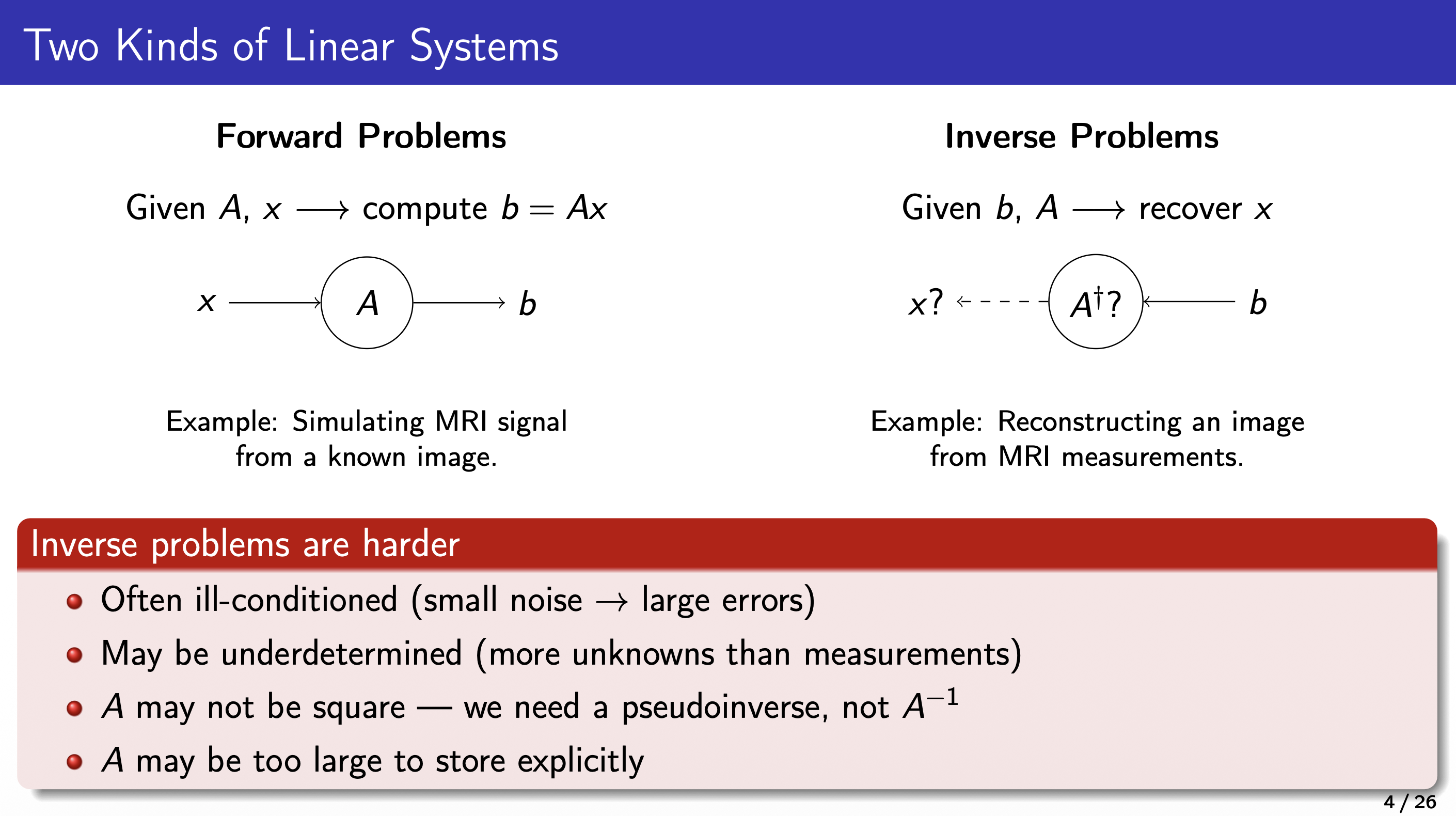

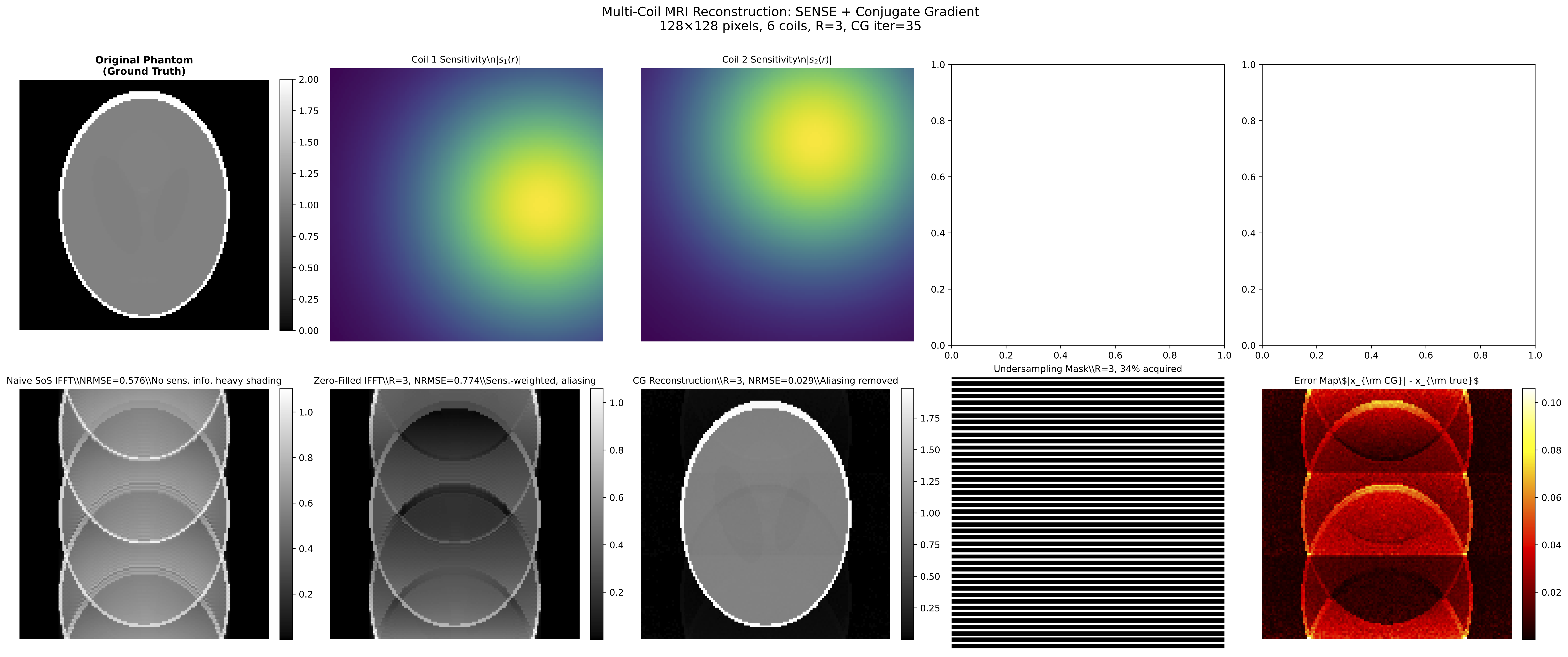

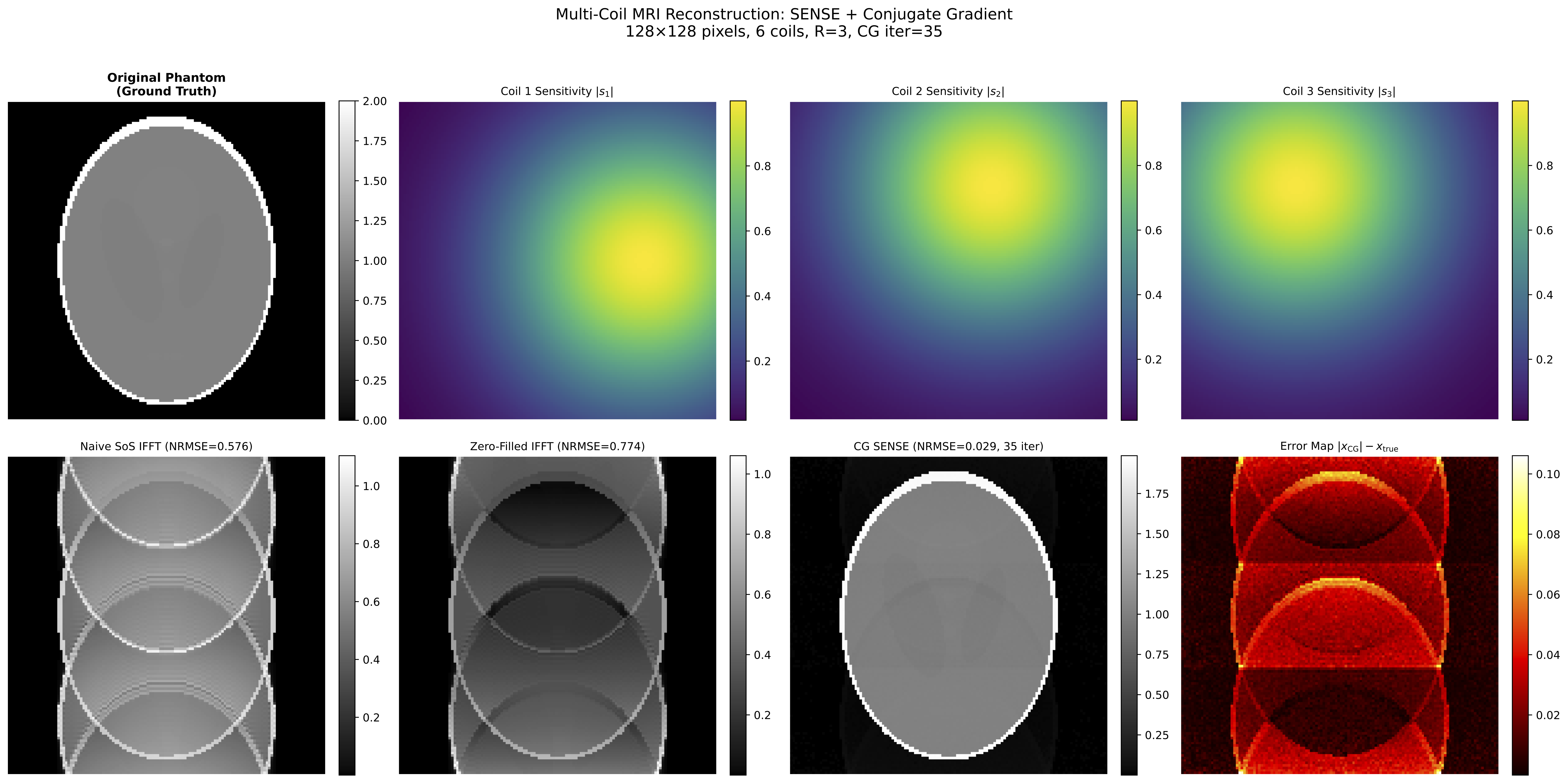

DeepSeek V4 was released a few days ago. I wanted to test how well DeepSeek could prepare lecture slides and course materials on the Conjugate Gradient algorithm for MRI reconstruction. Rather than iterating manually, I wondered: can I have one agent create the materials and another agent review them, then loop this automatically? The answer turned out to be a ~400-line shell script.

The idea is simple: wrap the claude CLI in a bash for loop, assign different roles (Student and Teacher) to each invocation, and let them coordinate through files on disk. Three rounds of back-and-forth produced a 26-page LaTeX Beamer lecture and a working multi-coil MRI reconstruction demo. Here is how it works.

Architecture

for round in 1..3:

  ┌────────────┐               ┌────────────┐
  │  STUDENT   │  ─produces─▶  │  TEACHER   │
  │  (agent)   │               │  (agent)   │
  └────────────┘               └────────────┘
        ▲                            │
        │        feedback file       │
        └────────────────────────────┘

Each invocation of claude -p runs as a separate process with no shared memory. The only communication channel is the filesystem: the Student writes slides and code, the Teacher reads them and writes a review to feedback/round-N.md, and the Student reads that review in the next round.

The shell script at a glance

ROUNDS=3

for round in $(seq 1 "$ROUNDS"); do
  # Phase 1: Student creates or improves materials
  (cd "$WORK_DIR" && claude \
    --allowedTools Read,Bash,Python,WebSearch,WebFetch,Write \
    -p "$STUDENT_PROMPT")

  # Phase 2: Teacher reviews and writes feedback
  (cd "$WORK_DIR" && claude \
    --allowedTools Read,Bash,Python,WebSearch,WebFetch,Write \
    -p "$TEACHER_PROMPT")
done

The real script adds resume support, progress tracking, verification gates, and git commits after each phase. Full script on GitHub.
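Resume support can be sketched with a progress file that records one line per completed phase. This is a minimal sketch under assumptions, not the real script's implementation: the file name `.progress`, the phase labels such as `1:student`, and the helper names `phase_done`, `mark_done`, and `run_phase` are all hypothetical.

```shell
# Hypothetical resume helpers: one completed phase per line in a progress file.
PROGRESS_FILE="${PROGRESS_FILE:-.progress}"   # assumed name, not from the real script

# Succeeds if the given phase label (e.g. "1:student") was already recorded.
phase_done() {
  [ -f "$PROGRESS_FILE" ] && grep -qxF -- "$1" "$PROGRESS_FILE"
}

# Appends a phase label to the progress file.
mark_done() {
  printf '%s\n' "$1" >> "$PROGRESS_FILE"
}

# run_phase LABEL COMMAND [ARGS...]
# Skips the command if LABEL is already recorded; otherwise runs it and
# records LABEL only when the command succeeds.
run_phase() {
  local phase="$1"
  shift
  if phase_done "$phase"; then
    echo "skip $phase"
    return 0
  fi
  "$@" && mark_done "$phase"
}
```

Inside the loop, each agent call would be wrapped as something like `run_phase "$round:student" some_command`, so that a rerun after a crash skips every phase already listed in the progress file and picks up at the first one that is missing.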

Role design

The two agents are defined entirely through their prompts. The code path is identical; only the instructions differ.

Student prompt specifies:

- Exact deliverables (LaTeX slides with named sections, Python scripts with specific figure outputs, MRI demo with from-scratch CG)

- Target audience (undergraduates with basic linear algebra)

- Format constraints (\begin{align*}, \includegraphics, if __name__ == "__main__")

- In rounds 2–3: the path to the Teacher’s feedback file and an instruction to address every point

Teacher prompt specifies:

- All files to review (slides, illustration code, MRI demo, figures, LaTeX compilation)

- Seven review criteria (technical accuracy, pedagogical clarity, visual quality, code quality, LaTeX quality, completeness, MRI application)

- Structured output format with severity levels (Critical / Important / Minor)

- A hard requirement to use the Write tool to save feedback/round-N.md
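The round-dependent parts of the two prompts could be assembled roughly as follows. This is an illustrative sketch only: the prompt wording is invented and far shorter than real prompts would be, and the helper names `feedback_path`, `student_prompt`, and `teacher_prompt`, as well as the default value of `WORK_DIR`, are assumptions. Run it with an absolute `WORK_DIR` so the feedback path in the prompt is absolute as well.

```shell
# Hypothetical prompt builders; the wording is illustrative, not the author's prompts.
WORK_DIR="${WORK_DIR:-$PWD/materials}"   # assumed default

# Prints the feedback file path for a given round.
feedback_path() {
  printf '%s/feedback/round-%s.md' "$WORK_DIR" "$1"
}

# Prints the Student prompt; from round 2 on it points at the previous review.
student_prompt() {
  local round="$1"
  local prompt="You are the Student. Produce the lecture slides and code for undergraduates with basic linear algebra."
  if [ "$round" -gt 1 ]; then
    prompt="$prompt Read the Teacher's review at $(feedback_path $((round - 1))) and address every point."
  fi
  printf '%s\n' "$prompt"
}

# Prints the Teacher prompt, naming the exact file the review must be saved to.
teacher_prompt() {
  local round="$1"
  printf '%s\n' "You are the Teacher. Review all files, rate each issue Critical / Important / Minor, and use the Write tool to save the review to $(feedback_path "$round")."
}
```

In the loop, `STUDENT_PROMPT="$(student_prompt "$round")"` and `TEACHER_PROMPT="$(teacher_prompt "$round")"` would then be set at the top of each round before the two claude calls.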

What I learned

1. Files are the universal interface. Two CLI invocations share no state. The only way to pass information is through files on disk. This turns out to be a feature: the feedback files create a natural audit trail, and crash recovery is trivial — just read the progress file and resume.

2. Agents sometimes talk instead of write. The Teacher occasionally outputs its review as chat text rather than calling the Write tool. The fix is a fallback: capture stdout, strip ANSI codes, and save the result as the feedback file. If the Write tool produced no file and stdout is also empty, exit with an error rather than silently advancing.

3. CWD matters. Early runs had feedback files landing in the script’s working directory instead of $WORK_DIR/feedback/. Wrapping each claude call with (cd "$WORK_DIR" && claude ...) fixed this. Absolute paths in prompts (for the feedback file path) add a second layer of safety.

4. Three rounds is about right. The first round catches the big problems (undefined LaTeX commands, crashing scripts). The second round refines the pedagogy (wrong figure on wrong slide, missing definitions). The third round polishes the details (overfull boxes, CLI flags, edge-case comments). A fourth round would probably hit diminishing returns.
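The fallback from lesson 2 might look like the sketch below. It assumes the Teacher call's stdout has already been captured to a log file; the helper names `strip_ansi` and `ensure_feedback` are hypothetical, and the sed pattern only covers common CSI escape sequences such as colors and cursor moves.

```shell
# Removes ANSI CSI escape sequences (colors, cursor moves) from stdin.
strip_ansi() {
  sed "s/$(printf '\033')\[[0-9;?]*[A-Za-z]//g"
}

# ensure_feedback FEEDBACK_FILE CAPTURED_STDOUT
# Keeps the feedback file if the agent wrote it. Otherwise falls back to the
# captured chat output, and fails if that contains no visible text either.
ensure_feedback() {
  local feedback_file="$1"
  local captured="$2"
  if [ -s "$feedback_file" ]; then
    return 0
  fi
  if [ -f "$captured" ]; then
    mkdir -p "$(dirname "$feedback_file")"
    strip_ansi < "$captured" > "$feedback_file"
    # Accept the fallback only if something other than whitespace remains.
    if grep -q '[^[:space:]]' "$feedback_file"; then
      return 0
    fi
    rm -f "$feedback_file"
  fi
  echo "error: no feedback produced for $feedback_file" >&2
  return 1
}
```

After the Teacher phase, the loop would call `ensure_feedback` with the expected feedback path and the captured log, and exit on a nonzero status so that the Student never starts a round without a review to read.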

Results

The generated materials

The final output is a self-contained lecture package:

materials/
├── slides/
│   ├── lecture.tex      # 788-line LaTeX Beamer source
│   ├── lecture.pdf      # Compiled 26-page PDF
│   └── figures/         # 9 vector figures
├── code/
│   ├── illustrations/   # Figure-generation scripts
│   └── mri_demo/        # Multi-coil SENSE reconstruction
├── feedback/
│   ├── round-1.md       # Teacher review: 3 critical + 8 important
│   ├── round-2.md       # Teacher review: all critical resolved
│   └── round-3.md       # Teacher review: approaching lecture-ready
└── requirements.txt

Not bad for a shell script that just calls claude -p in a loop.

Running your own

The script is topic-agnostic. Edit the three prompt variables and the CLAUDE.md to change the subject. The same loop structure works for any task that benefits from iterative review: documentation, code review, lesson plans, grant proposals.

git clone git@github.com:ggluo/claude-by-claude.git
cd claude-by-claude
./create-slides.sh           # start from scratch
./create-slides.sh --resume  # resume after a crash
